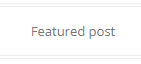

There are a couple of things I do not like about the default WordPress UI (here, the Twenty Twelve theme), namely the “Featured post” header and the “Proudly powered by WordPress” credit at the bottom of the page.

Here are the hacks I use to remove them:

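- Featured Post:

  - Go to Appearance > Theme Editor > content.php.

  - Wrap the following block in an HTML comment. The PHP still runs, but its output ends up inside the comment, so the browser does not display it: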

    <!--<?php if ( is_sticky() && is_home() && ! is_paged() ) : ?>
    <div class="featured-post">
        <?php _e( 'Featured post', 'twentytwelve' ); ?>
    </div>
    <?php endif; ?>-->

- Proudly powered by WordPress:

  - Go to Appearance > Theme Editor > footer.php.

  - Wrap the div class="site-info" block in an HTML comment. Note that this also hides the privacy policy link, which lives in the same block:

    <!--<div class="site-info">
        <?php do_action( 'twentytwelve_credits' ); ?>
        <?php
        if ( function_exists( 'the_privacy_policy_link' ) ) {
            the_privacy_policy_link( '', '<span role="separator" aria-hidden="true"></span>' );
        }
        ?>
        <a href="<?php echo esc_url( __( 'https://wordpress.org/', 'twentytwelve' ) ); ?>" class="imprint" title="<?php esc_attr_e( 'Semantic Personal Publishing Platform', 'twentytwelve' ); ?>">
            <?php
            /* translators: %s: WordPress */
            printf( __( 'Proudly powered by %s', 'twentytwelve' ), 'WordPress' );
            ?>
        </a>
    </div>--><!-- .site-info -->
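
One caveat with both hacks: edits made directly to a theme's content.php and footer.php are overwritten when the theme is updated. As a rough alternative, the same two elements can be hidden with CSS from a child theme's functions.php. The sketch below is only an illustration, not part of the original steps: the function names `mytheme_hidden_elements_css` and `mytheme_print_hidden_elements_css` are made up, and it assumes the `featured-post` and `site-info` class names from the markup above are unchanged. As with the HTML comment approach, the markup is still sent to the browser; it is just not displayed.

```php
<?php
/**
 * Sketch for a child theme's functions.php (hypothetical helper names).
 * Hides the "Featured post" header and the footer credit with CSS
 * instead of editing content.php and footer.php.
 */

// Build the CSS rule. The selectors are the class names used in the
// Twenty Twelve markup: .featured-post and .site-info.
function mytheme_hidden_elements_css() {
    return '.featured-post, .site-info { display: none; }';
}

// Print the rule as an inline <style> element.
function mytheme_print_hidden_elements_css() {
    echo '<style>' . mytheme_hidden_elements_css() . '</style>' . "\n";
}

// Register the callback on the wp_head hook, but only when WordPress is
// loaded, so the file can also be included on its own without errors.
if ( function_exists( 'add_action' ) ) {
    add_action( 'wp_head', 'mytheme_print_hidden_elements_css' );
}
```

Because the rule hides the whole `.site-info` block, the privacy policy link disappears as well, just as it does with the footer.php edit above. Narrowing the second selector to `.site-info .imprint` would hide only the "Proudly powered by WordPress" link.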